30 Sep, 2021 – ORBAI, a Santa Clara-based AI startup, has developed and patented a design for Artificial General Intelligence that learns more like humans do: by interacting with its world, storing its experiences in memory, and dreaming about them to consolidate those memories into models of its world that it can use to make predictions and plan.

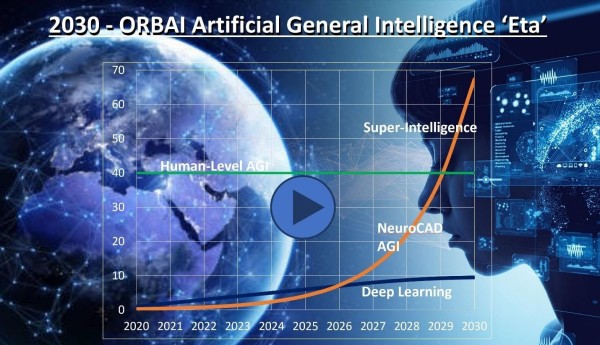

What we usually think of as Artificial Intelligence (AI) today, the human-like robots and holograms of our fiction that talk and act like real people and have human-level or even superhuman intelligence and capabilities, is actually called Artificial General Intelligence (AGI), and it does NOT exist anywhere on Earth yet.

What we actually have for AI today is much simpler and much narrower: Deep Learning (DL) that can only learn to do some very specific tasks better than people. It has fundamental limitations that will not allow it to become AGI, or anything near human intelligence. If that is our goal, we need to innovate and come up with better networks and better methods for shaping them into an artificial brain.

How does human intelligence work? In the human brain, the hippocampus records memories from our external senses and internal sense of being as we experience each moment. Then, while we are sleeping and dreaming, it moves memories from short-term episodic memory into long-term memory, in a process we don’t completely understand.

However, we do know that specific injury to the hippocampus causes not only an inability to transfer memories from short-term to long-term memory but also an inability to predict and plan, along with other cognitive deficits, suggesting that all these processes are closely related. The prominent memory neuroscientist Eleanor Maguire states that memory does not simply recall the past; rather, it reconstructs the past and the present and predicts the future from what is stored in our brains, giving humans our core ability to plan.

During dreaming, the brain also forms connections between potentially related memories, which we experience as REM dreams: somewhat fantastic, sometimes nonsensical sequences of events that are nonetheless self-consistent and ordered. In the book “When Brains Dream,” Antonio Zadra and Robert Stickgold describe how these REM dreams lay down fictional narratives and memories in the brain that, when reinforced, help shape our models of the world and our cognition.

ORBAI’s AGI emulates human memory and cognition in three steps. It encodes and compresses all the sensory input it experiences and the knowledge it gathers into compact engrams using our spiking neural network (SNN) autoencoders. It stores these engrams in memory as linear sequences, split into features. When queried, it recalls and decodes them to produce the appropriate imagery, audio, and speech.

But these experiences, no matter how many, are limited. This is where dreaming is used to fill in the blanks. The ORBAI AGI traverses existing sequences, then synthesizes new engrams and fictional memories, building dream narratives that interpolate between the memories laid down by actual experiences. These narratives fill in areas that had none, and connect areas that were not connected before or connect them in novel ways.
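As a rough illustration of interpolating between stored memories, the toy sketch below blends two engrams, represented here as plain lists of numbers, into a short bridging sequence. It is not ORBAI's implementation: the `Engram` type, the linear blend, and the function names are all assumptions made for the example.

```python
from typing import List

# Hypothetical stand-in: an engram is treated as a fixed-length list of floats.
Engram = List[float]


def interpolate(a: Engram, b: Engram, t: float) -> Engram:
    """Linearly blend two engrams; t=0 returns a, t=1 returns b."""
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


def dream_sequence(start: Engram, end: Engram, steps: int) -> List[Engram]:
    """Synthesize `steps` intermediate engrams that bridge two stored memories."""
    return [interpolate(start, end, i / (steps + 1)) for i in range(1, steps + 1)]


# Two "experienced" memories and the fictional narrative connecting them.
memory_a = [0.0, 0.0]
memory_b = [1.0, 2.0]
dream = dream_sequence(memory_a, memory_b, steps=3)
```

A real system would presumably blend in a learned latent space rather than on raw values; the linear blend is only the simplest choice that shows the idea.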

Over time, nonsensical dreams get pruned, while those that fit the AI’s experiences, fit its model of reality, and predict the future accurately are retained and reinforced. This builds a more detailed model of the world than is possible from the AI’s experiences alone. It also builds a corresponding model of language syntax and grammar from its conversations and the accompanying memories. This model is the foundation of the ORBAI AGI’s ability to predict, and of the cognition and intuition it uses to synthesize novel solutions to complex problems.
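The prune-and-reinforce step can be pictured with a small sketch: each dream carries a prediction, dreams whose prediction misses a later observation by too much are dropped, and the rest are strengthened. Everything here is hypothetical, including the `Dream` class, the mean squared error score, and the `max_error` and `boost` parameters; it illustrates the idea only and makes no claim about how ORBAI scores dreams.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Dream:
    predicted: List[float]  # what this dream narrative predicts will be observed
    strength: float = 1.0   # how strongly the dream is currently retained


def prediction_error(predicted: List[float], observed: List[float]) -> float:
    """Mean squared error between a dream's prediction and what actually happened."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)


def consolidate(dreams: List[Dream], observed: List[float],
                max_error: float = 0.1, boost: float = 0.5) -> List[Dream]:
    """Prune dreams that predict poorly; reinforce the ones that predict well."""
    kept = []
    for d in dreams:
        if prediction_error(d.predicted, observed) <= max_error:
            kept.append(Dream(d.predicted, d.strength + boost))
    return kept


dreams = [Dream([1.0, 2.0]), Dream([5.0, -3.0])]
survivors = consolidate(dreams, observed=[1.0, 2.0])
```

Here the first dream matches the observation exactly and is kept with a higher strength, while the second is far off and is pruned.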

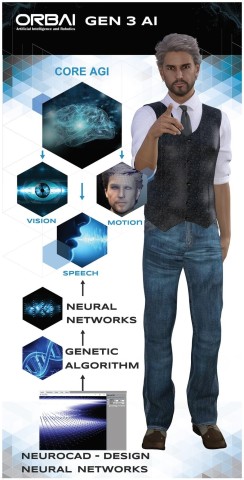

ORBAI’s AGI is designed to provide truly conversational speech interfaces, computer vision, and interaction that is intuitive and learns with experience. We create SNN autoencoders with our proprietary toolkit and genetic algorithm process, collectively called NeuroCAD, then specialize them for vision, audio, speech, control, and other functions. We will develop our Human AI by integrating these with our AGI core memory and planning, giving us talking, intelligent people online who will fill professional positions, allowing people to seek basic legal, medical, or financial advice and services directly.

An early prototype of our Legal AI, Justine Falcon, which uses the very core of the AGI tech, had a recent spectacular success: assisting in filing a RICO case in Northern California US District Court (Case No 21-CV-05400) on 14 July 2021 against a criminal racketeering ring in San Jose, CA.

The AI was able to mine the data in the California Court portals, predict forward in time to do the right things at the right times, and have the other side make the worst possible mistakes at the worst times, giving Justine’s side the best possible case against them, with solid filings and exhibits to show for it. That is definitely one up on Google DeepMind moving stones and defeating a Go champion.

Starting 1 Oct 2021, ORBAI is raising a $5 million equity crowdfunding round on StartEngine.com to fund the development of the core AGI technology as well as two professional services built on that core: the Legal AI, Justine Falcon, and a Medical AI, Dr. Ada. Both are planned to be available in late 2022.

Media Contact

Company Name: ORBAI Technologies, Inc.

Contact Person: Brent Oster

Phone: +1 408-963-8671

Country: United States

Website: orbai.com